Introduction:

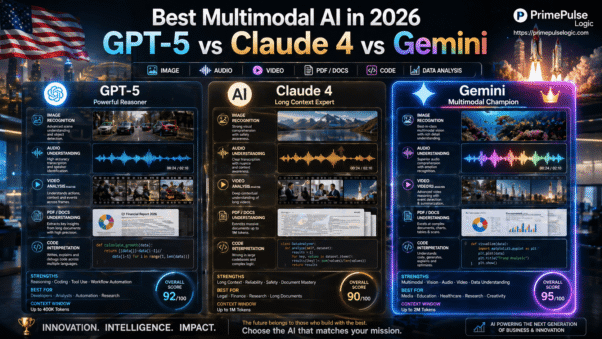

GPT-5 vs Claude 4 vs Gemini 2 in 2026 is not a simple one-winner contest. The best choice depends on what you need most, because OpenAI’s current GPT-5.5 family is pushing efficiency and coding strength, Anthropic’s Claude Opus 4.7 and Sonnet 4.6 are built for long-context work and dependable output, and Google’s Gemini 3.1 Pro is a natively multimodal model that can read text, images, audio, video, and very large files in one flow. That is the real story behind the search term GPT-5 vs Claude 4 in 2026.

Google’s live lineup has moved beyond the older “Gemini 2” label, but the search intent is still clear: people want the best AI model in 2026 for coding, research, documents, and automation. OpenAI says GPT-5.5 is more efficient and gets higher-quality outputs with fewer tokens and retries, while Google describes Gemini 3 as “our most intelligent model yet.” That tells you where the market is headed.

Which AI Model Wins in 2026? Overall, GPT-5 vs Claude 4 vs Gemini 2

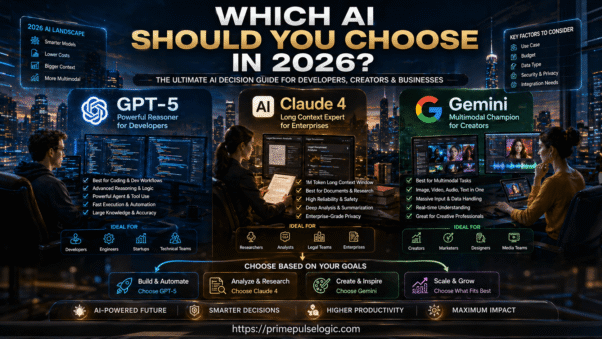

If you want one direct answer, here it is. GPT-5 is usually the strongest pick for coding and agent-heavy workflows, Claude 4 is the safer bet for long documents and careful reasoning over large context, and Gemini is the best fit when your work mixes text, images, audio, video, and large-file intake. That is the cleanest AI model comparison 2026 summary for most readers.

Quick Verdict by Use Case, coding, writing, and research

For coding, GPT-5 family models stand out because OpenAI highlights better efficiency and strong performance on coding tasks. For writing and long-form analysis, Claude is easier to trust because its 1M token context lets you keep more material in one session. For research and mixed media work, Gemini 3.1 Pro is built to process vast datasets across text, audio, images, video, and entire code repositories.

Best AI model 2026 summary for beginners

If you are new, keep it simple. Choose GPT-5 when you want a powerful general model that leans toward coding and workflow speed. Choose Claude 4 when you deal with long reports, policy text, or deep editing. Choose Gemini when your task looks messy, visual, or media-heavy. That is the easiest way to understand a large language model comparison in the real world.

What Changed from GPT-4 and Claude 3 to 2026 Models

The biggest change is not just raw intelligence; it is how the models behave under pressure. GPT-5.5 is described by OpenAI as more efficient and capable of reaching higher-quality outputs with fewer retries, which matters when a model is part of a daily workflow instead of a one-off chat. Claude’s newer family keeps the long-context advantage while widening the output ceiling. Google’s Gemini 3.1 Pro raises the bar for native multimodality and large input handling.

ChatGPT 5 review, key upgrades explained

A useful ChatGPT 5 review should focus on the user experience, not just the label. The important upgrade is that GPT-5.5 works more efficiently through problems, which means fewer wasted tokens, fewer retries, and cleaner final answers. OpenAI also ties the model to higher-end pricing tiers for more serious workloads, which signals a product aimed at professional users.

Claude 4 features vs previous versions

Claude 4’s main value is still context depth and consistency. Anthropic’s current docs show Opus 4.7, Opus 4.6, and Sonnet 4.6 with a full 1M token context window at standard pricing. That is a major practical win for readers who work with contracts, research archives, source code, or long editorial drafts.

Which AI Is Best for Coding and Agent Workflows

For coding, GPT-5 family models are a strong default because OpenAI is explicitly positioning GPT-5.5 around coding performance and efficiency. That matters when you are asking a model to reason through bug fixes, API integration, refactors, or repeated prompt iterations. In an AI performance comparison, fewer retries often mean faster delivery.

GPT-5 coding performance vs Claude 4 benchmarks

A fair way to read this section is simple. GPT-5 is often the model you reach for first when you need sharp code generation and faster iteration. Claude is often the model you reach for when the codebase is large and the context is already dense. That is why the phrase best AI for coding 2026 depends on task shape, not just model fame.

Agent automation, tool use accuracy comparison

Agent work is not just “ask and answer.” It is plan, call tools, inspect output, and adjust. OpenAI’s GPT-5.5 docs emphasize fewer retries, which points to cleaner execution in chained tasks. Claude’s model family supports very large context and batch workflows, which helps when the agent needs to carry a lot of state. Gemini 3.1 Pro is especially useful when the agent must understand multiple formats at once. That is the core of AI agent workflows in 2026.
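The plan, call, inspect, adjust loop described above can be sketched in a few lines. This is a minimal illustration, not any vendor's agent API: `run_agent`, the fixed `plan`, and the stub tools are all hypothetical names, and a real agent would ask the model to produce and revise the plan instead of receiving it up front.

```python
from typing import Callable, Dict, List, Tuple


def run_agent(
    plan: List[Tuple[str, str]],
    tools: Dict[str, Callable[[str], str]],
    max_retries: int = 2,
) -> List[str]:
    """Run each (tool_name, argument) step, retrying a failed step.

    Hypothetical sketch: the plan is fixed here, whereas a real agent
    would let the model write and adjust it between steps.
    """
    log: List[str] = []
    for tool_name, argument in plan:
        for attempt in range(1, max_retries + 2):
            try:
                result = tools[tool_name](argument)  # call the tool
            except Exception as exc:  # inspect: the call failed
                log.append(f"{tool_name} attempt {attempt} failed: {exc}")
                continue  # adjust: try the same step again
            log.append(f"{tool_name} attempt {attempt} ok: {result}")
            break
        else:
            # Every attempt failed, so stop instead of running later steps.
            log.append(f"{tool_name} gave up")
            break
    return log


if __name__ == "__main__":
    stub_tools = {"echo": lambda text: text, "upper": lambda text: text.upper()}
    for line in run_agent([("echo", "hello"), ("upper", "done")], stub_tools):
        print(line)
```

Each retry in this loop is another paid model or tool call in a real system, which is why a model that needs fewer retries tends to finish chained tasks faster and cheaper.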

Which Model Is Better for Reasoning and Exams

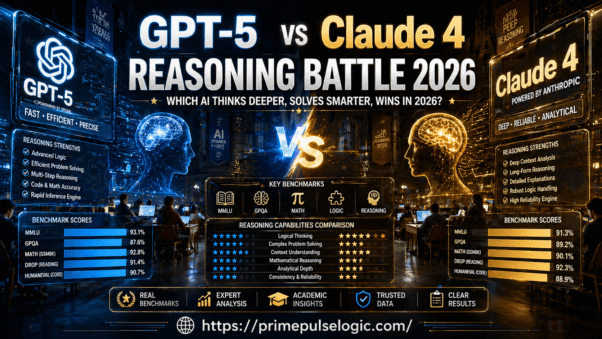

If you care about structured problem solving, you should look at official model cards and product claims before you look at hype. Google’s Gemini 3.1 Pro model card says it is designed for real-world complexity and advanced reasoning, while OpenAI emphasizes that GPT-5.5 reaches better quality with fewer retries. Anthropic’s pricing and model docs show a model family intended for sustained, serious work across long sessions. That is a solid base for AI reasoning accuracy.

MMLU, GPQA, and math reasoning comparison

Benchmark names like MMLU benchmark and GPQA reasoning test still matter, but only as signals. They help you compare general knowledge and hard reasoning, yet they do not replace real usage. A model can look great on a test and still feel clumsy in a messy project. That is why the best article does not stop at scores; it explains the workflow behind the score.

Logical thinking accuracy across models

In practice, logical thinking shows up when a model keeps its chain of reasoning clean across multiple steps. GPT-5.5 is tuned for efficient problem solving, Claude keeps a large working memory for complex instructions, and Gemini 3.1 Pro is built for adaptive reasoning across mixed inputs. That is the kind of AI reasoning accuracy readers actually feel.

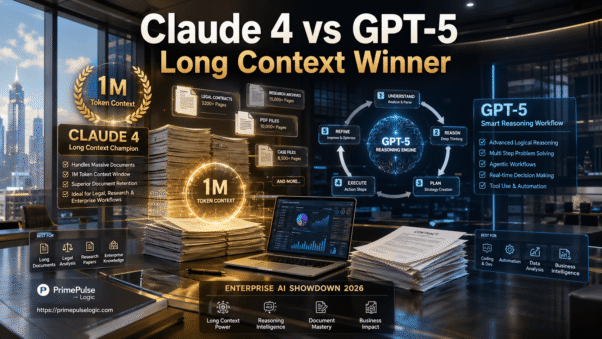

Which AI Handles Long Context and Documents Best

Claude is the clear long-context specialist in this comparison. Anthropic’s docs say Opus 4.7, Opus 4.6, and Sonnet 4.6 include the full 1M token context window at standard pricing, and that is a real advantage for analysts, lawyers, editors, and researchers. A long context window AI is not a luxury when your work lives inside huge files. It is the whole game.

Claude 4 long context performance explained

Claude wins here because it is designed to keep more of the conversation in memory without forcing you to chop the task into tiny fragments. That helps when you compare versions of a report, inspect a large codebase, or review many pages of source material at once. For AI for long documents, that is a serious advantage.
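To see what "chopping the task into fragments" means in practice, here is a rough sketch that estimates whether a document fits in a context window and splits it if not. The four-characters-per-token figure is only a common rule of thumb for English text, not a real tokenizer, and `estimate_tokens`, `plan_chunks`, and the reserved-token budget are hypothetical names and values chosen for illustration.

```python
from typing import List

# Assumption: roughly 4 characters per token for English text.
# Real tokenizers vary by model and language.
CHARS_PER_TOKEN = 4


def estimate_tokens(text: str) -> int:
    """Approximate token count, rounded up."""
    return -(-len(text) // CHARS_PER_TOKEN)


def plan_chunks(
    text: str,
    context_tokens: int = 1_000_000,
    reserved_tokens: int = 50_000,
) -> List[str]:
    """Split text into pieces that each fit the window.

    reserved_tokens keeps room for the prompt and the model's answer.
    """
    budget_chars = (context_tokens - reserved_tokens) * CHARS_PER_TOKEN
    if budget_chars <= 0:
        raise ValueError("reserved_tokens must be smaller than context_tokens")
    return [text[i:i + budget_chars] for i in range(0, len(text), budget_chars)]


if __name__ == "__main__":
    document = "word " * 300_000  # about 1.5M characters of sample text
    print(estimate_tokens(document), "estimated tokens")
    print(len(plan_chunks(document)), "chunk(s) at a 1M window")
    print(len(plan_chunks(document, context_tokens=128_000)), "chunk(s) at a 128K window")
```

A larger window means fewer chunks, and fewer chunks means fewer places where the model can lose track of what an earlier section said.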

Gemini 2 large document and PDF handling

Gemini’s live family is now Gemini 3 and 3.1, and the model card shows input support for text, images, audio, and video with a context window of up to 1M tokens. That makes it a natural fit for PDFs, slide decks, screenshots, recordings, and mixed media research. If your workflow is document-heavy, Gemini is often the most flexible intake machine.

Which AI Wins in Multimodal Tasks in 2026

This is where Gemini has the strongest identity. Google’s official pages say Gemini 3.1 Pro can comprehend text, audio, images, video, and entire code repositories, and its model card frames it as especially well-suited for complex, real-world tasks. That is exactly what people mean when they search for multimodal AI models.

Image, video, and audio understanding test

When the input is visual or mixed, you want a model that does not panic when the data stops looking like plain text. Gemini 3.1 Pro handles those formats directly, and Google’s pricing page also shows dedicated token pricing by modality. That gives it a practical edge for teams working on media analysis, design review, and voice-based workflows.

Real use cases for multimodal AI models

Use Gemini when you need one model to read a long PDF, inspect screenshots, listen to audio, and pull insights into one output. Use Claude when the same task is mostly text, but the context is huge. Use GPT-5 when the job turns into a structured workflow or code-heavy assistant. That is the cleanest way to separate AI for automation workflows from AI for research.

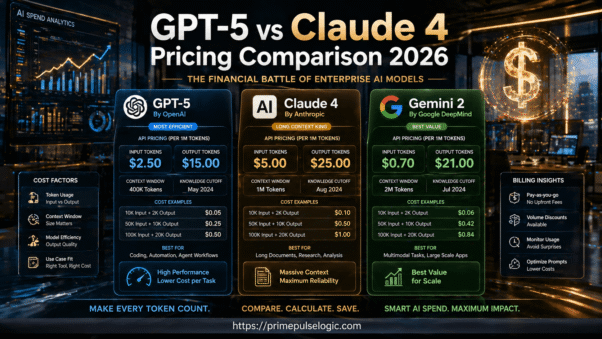

Pricing Comparison: Which AI Gives Better Value

Pricing matters because most teams do not buy a model once; they run it all day. OpenAI’s current pricing page shows GPT-5.5 at $2.50 input and $15 output per 1M tokens in the standard tier. Anthropic lists Opus 4.7 at $5 input and $25 output per 1M tokens. Google’s Gemini pricing page shows paid tiers for Gemini 3.1 Pro and related models with separate input, output, and caching costs. That is the heart of AI pricing per token and API cost comparison.

GPT-5 vs Claude 4 vs Gemini 2 pricing breakdown

| Model family | Strength | Pricing signal | Best fit |
| --- | --- | --- | --- |
| GPT-5.5 | Strong coding and efficient reasoning | $2.50 input, $15 output per 1M tokens | Developers and agent workflows |
| Claude Opus 4.7 | Massive context, steady long-form work | $5 input, $25 output per 1M tokens | Long documents and deep editing |
| Gemini 3.1 Pro | Native multimodal reasoning | Paid tier with modality-based pricing | PDFs, images, audio, video, mixed research |

OpenAI’s, Anthropic’s, and Google’s official pricing pages all show that the cheapest model is not always the best value. The best value depends on how much output you need, how long the context runs, and whether your task is text-only or multimodal. That is why AI model pricing 2026 should always be read beside the actual workload shape.

Cost per token and real usage examples

A short support chat costs one thing. A 200-page document review costs something else. A coding session with repeated tool calls costs even more. OpenAI’s docs highlight that GPT-5.5 can get higher-quality outputs with fewer retries, which can lower real cost beyond the raw token price. Anthropic and Google both expose caching and batch options, which matter a lot in high-volume work. That is the practical side of Claude API cost and GPT-5 API pricing.
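The arithmetic behind those examples is simple enough to sketch. The prices below are the per-1M-token figures quoted in this article, so check the vendors' pricing pages before relying on them. The dictionary keys are plain labels, not official API model identifiers, and the token counts and the `attempts` parameter are hypothetical inputs for illustration.

```python
# USD per 1M tokens, as quoted in this article (not fetched from any API).
PRICES_PER_MTOK = {
    "gpt-5.5": {"input": 2.50, "output": 15.00},
    "claude-opus-4.7": {"input": 5.00, "output": 25.00},
}


def request_cost(model: str, input_tokens: int, output_tokens: int, attempts: int = 1) -> float:
    """Estimated USD cost of a task, counting every attempt in full."""
    price = PRICES_PER_MTOK[model]
    per_attempt = (
        input_tokens * price["input"] + output_tokens * price["output"]
    ) / 1_000_000
    return per_attempt * attempts


if __name__ == "__main__":
    # Hypothetical document review: 150,000 tokens in, 4,000 tokens out.
    for model in PRICES_PER_MTOK:
        cost = request_cost(model, 150_000, 4_000)
        print(f"{model}: ${cost:.3f} per successful first attempt")
```

With these inputs the review works out to about $0.435 on the GPT-5.5 prices and $0.85 on the Opus 4.7 prices. Set `attempts=2` on the cheaper model and it comes to $0.87, which is why retries can matter more than the sticker price. The sketch ignores caching and batch discounts.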

Safety, Hallucinations, and Trust Comparison

No serious buyer should ignore trust. Google’s Gemini 3.1 Pro model card says it outperforms Gemini 3 Pro across safety and tone while keeping unjustified refusals low. OpenAI focuses on improved efficiency and fewer retries, which often helps reduce messy outputs in real use. Anthropic’s current family emphasizes long-context reliability and controlled output under standard pricing tiers. That is the practical side of safe AI models and reliable AI tools.

Which AI gives more accurate answers

Accuracy is not just about giving the right final answer; it is about not drifting halfway through. GPT-5.5 is strongest when the task has structure and the model can move cleanly through steps. Claude is strong when the source material is long and the answer has to stay anchored. Gemini is strongest when the answer depends on many input types at once. That is where the AI hallucination rate becomes a practical question, not a theoretical one.

Claude’s safety vs GPT’s reasoning reliability

Claude’s long-context discipline makes it feel steady in editorial and enterprise work. GPT-5.5 feels more aggressive and efficient in reasoning-heavy work. Gemini feels broader because it can take more kinds of input in one pass. In plain English, each model is trustworthy in a different way. That is the real answer to the trust debate.

Why Each AI Wins

GPT-5 wins when the task needs fast reasoning, coding support, and agent-style execution. Claude 4 wins when the job is long, dense, and sensitive to context drift. Gemini wins when the work is visual, audio-rich, or file-heavy. That is the cleanest way to think about OpenAI vs Anthropic vs Google DeepMind in one article.

Reasons GPT-5 is best for coding and agents

OpenAI’s current docs make GPT-5.5 look like a professional workhorse, not a toy. It is efficient, it reduces retries, and it is priced for serious API use. That combination is why many teams still treat GPT as the default for autonomous AI tasks and coding assistants.

Reasons Claude 4 is best for long context

Anthropic gives Claude a huge memory advantage with the 1M token context window on its top current models. For researchers, lawyers, analysts, and editors, that is often the difference between a clean workflow and a chopped-up mess. If the task is document-first, Claude usually feels calmer and more useful.

Reasons Gemini 2 is best for multimodal tasks

Even though the older “Gemini 2” keyword is still what people search, Google’s current lineup is Gemini 3 and 3.1. The live model card says Gemini 3.1 Pro understands text, audio, images, video, and code repositories with a context window of up to 1M tokens. That is why it remains the best answer for multimodal work.

Which AI Should You Choose in 2026

Pick GPT-5 if you build, code, automate, or need a sharp general model with strong efficiency. Pick Claude 4 if you read and write long material all day and need context discipline. Pick Gemini if your work lives across PDFs, visuals, audio, and mixed input. That is the simplest, best AI model 2026 decision tree.
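That decision tree is small enough to write down as a function. This is only a sketch of the rule of thumb above: `pick_model` and its three flags are hypothetical, and the order of the checks (mixed media first, then long text, then GPT-5 as the general default) is an assumption, since real workloads often tick more than one box.

```python
def pick_model(mixed_media: bool, long_documents: bool, coding_or_agents: bool) -> str:
    """Return the model family this article suggests trying first.

    Assumption: mixed-media input is checked first, then long text,
    and GPT-5 is the fallback for coding, agents, and general work.
    """
    if mixed_media:
        return "Gemini"
    if long_documents and not coding_or_agents:
        return "Claude"
    return "GPT-5"


if __name__ == "__main__":
    print(pick_model(mixed_media=False, long_documents=False, coding_or_agents=True))
    print(pick_model(mixed_media=False, long_documents=True, coding_or_agents=False))
    print(pick_model(mixed_media=True, long_documents=True, coding_or_agents=False))
```

Treat the result as a starting point for a trial, not a final verdict; a task that is both long-context and code-heavy is exactly the case where it pays to test two models side by side.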

Best AI for developers, creators, businesses

Developers usually get the most from GPT-5. Creators who edit long drafts often prefer Claude. Business teams that juggle media, notes, and knowledge files often get the most from Gemini. That is the short version of AI for business productivity and the best AI for coding in 2026.

Final recommendation based on real needs

Here is the honest verdict. Do not ask which model is “best” in a vacuum. Ask which one matches your daily work. If your work is code and agents, start with GPT-5. If it is long context and careful writing, start with Claude. If it is mixed media and huge inputs, start with Gemini. That is the right answer to GPT-5 vs Claude 4 vs Gemini 2 in 2026.

FAQs:

Which AI model is best in 2026?

The best model depends on the job. GPT-5 is the strongest all-around choice for coding and agent workflows. Claude is best for long documents and deep context. Gemini is best for multimodal work, especially when you need text, images, audio, and video in one task.

Is GPT-5 better than Claude 4 for coding?

For most coding tasks, GPT-5 is the better first choice because OpenAI positions GPT-5.5 around efficient problem solving and coding strength. Claude can still be excellent for huge codebases and long-context debugging. The better answer depends on the size and shape of the code task.

Is Claude better for long documents?

Yes. Claude’s current top models support a full 1M token context window at standard pricing, which makes it easier to work with huge files, reports, and research archives. That is one of the clearest long-form advantages in the whole AI model comparison 2026.

Is Gemini best for multimodal tasks?

Yes, Gemini is the strongest fit when the input includes images, audio, video, and text together. Gemini 3.1 Pro is designed for complex multimodal reasoning and can handle a 1M token context window. That makes it a natural choice for mixed-media analysis and large file workflows.

Which AI gives the best value for money?

Value depends on the workload. GPT-5.5 is attractive for efficient reasoning and coding. Claude is strong when you need a huge context. Gemini is valuable when one model can replace several separate tools for document-heavy or multimodal work. The cheapest token price is not always the best total cost.

Final Note:

If you want more practical AI guides, deeper comparisons, and decision-focused content like this, keep following PrimePulseLogic and use the contact form to start a conversation about your content or AI strategy. The best results come when you choose the right model for the right job, not when you chase the loudest headline. PrimePulseLogic is a strong place to build that kind of clarity.